Things I still wouldn’t delegate to AI

When it comes to AI, I consider myself a “skeptical optimist.” I think it has come a long way. I even (controversially) put it in my testing pipeline. But sometimes, when I see how others use it, I wonder: are we going too far?

I’m not just talking about people handing over their email inbox to OpenClaw. I’m referring to major incidents, like the report that “AWS suffered ‘at least two outages’ caused by AI tools.”

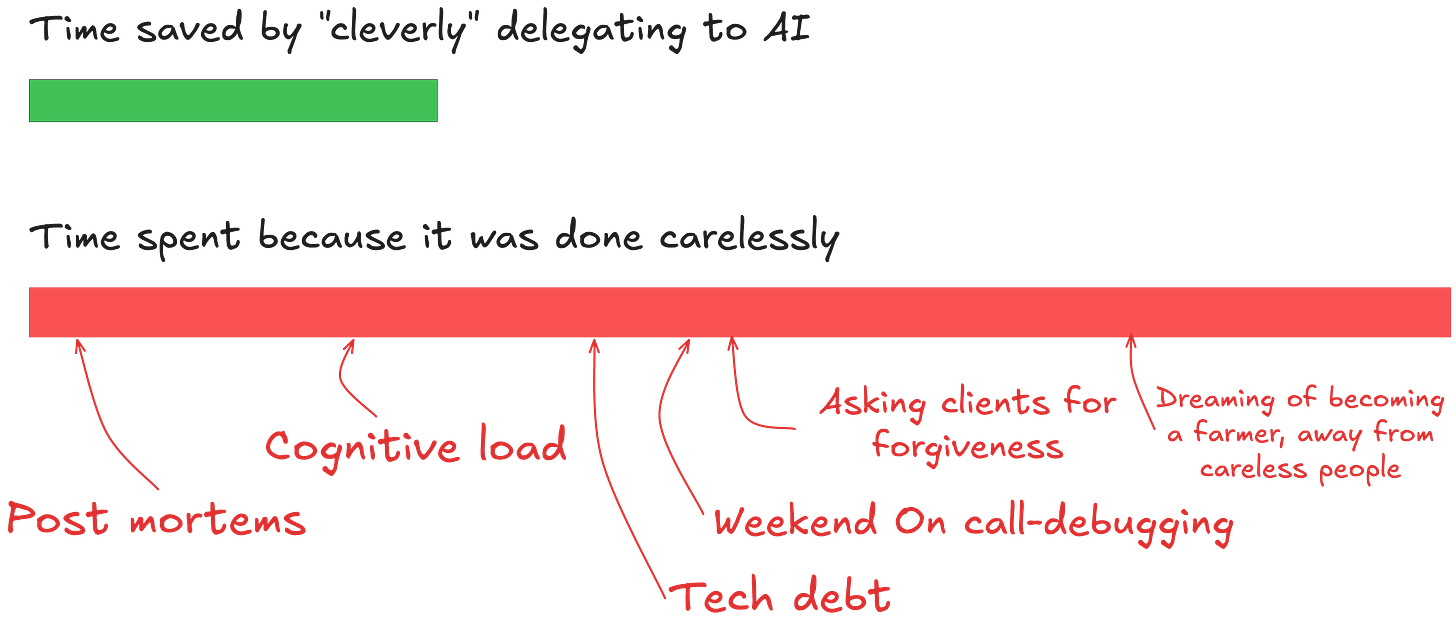

Code is cheap now, and we can fully delegate it to AI, but coding is only a small part of our jobs. The other parts, like handling incidents caused by AI-generated code, are neither cheap nor safe to delegate.

In all the situations below, you'll notice a pattern: people think “AI can handle most of it, so why not all of it?” Here’s how that leads to disaster.

Hiring

The misuse of automation in hiring predates the rise of LLMs.

Eleven years ago, I applied for a Django role and got rejected within two minutes, at 1 AM, because I supposedly needed to know more about “Python” for the job. The email seemed to be written by a person. I submitted a new application with just one word added and received an interview invitation… The rejection happened because the scanner didn’t find the word “Python”.
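Here is a minimal sketch of how a keyword screen like that might behave. The function name, the keyword list, and the sample resumes are all hypothetical; the rules of the real scanner are unknown.

```python
import re

# Hypothetical keyword list; the real scanner's rules are unknown.
REQUIRED_KEYWORDS = ["python"]


def passes_keyword_screen(resume_text: str, required=REQUIRED_KEYWORDS) -> bool:
    """Return True only if every required keyword appears as a whole word."""
    words = set(re.findall(r"[a-z0-9+#]+", resume_text.lower()))
    return all(keyword in words for keyword in required)


# A resume that is clearly about Python work, without ever saying "Python".
django_resume = "Five years building Django and Flask services, Celery workers, pytest suites."
# The same resume with one word added.
patched_resume = django_resume + " Python."

print(passes_keyword_screen(django_resume))   # False
print(passes_keyword_screen(patched_resume))  # True
```

A filter like this has no idea that Django implies Python. It only checks whether a string is present, which is why one extra word flips the outcome.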

The main problem with companies that pull “clever” stunts like these is that they exclude great candidates. Not only that, but people will notice your flaws and share them publicly on platforms like Glassdoor, which can tank your reputation.

Some argue that automation is necessary because applicant volume can become overwhelming. I disagree. During the COVID hiring surge, I reviewed over 1,000 resumes a year and never considered automating screening.

You shouldn't automate hiring because it is the most important thing you do.

Hiring well is the most important thing in the universe. […] Nothing else comes close. So when you’re working on hiring […] everything else you could be doing is stupid and should be ignored!

— Valve New Employee Handbook

Even with 300 applicants a month, you can review every resume in under an hour, and with better judgment than an AI. That hour is worth more than the risk of dismissing a potentially great candidate. Finding the right candidate early also reduces the hours spent on interviews.

Now that people are embedding LLMs into the hiring process, the situation has worsened. I see many pitches for tools that claim to be better at evaluating candidates’ interview performance than a human, which is simply absurd.

Hiring is a human process: you need to understand not only whether what candidates say makes sense, but also what excites and motivates them, to see if they’ll be a good fit for the role.

You can’t measure qualities like enthusiasm and soft skills with AI. It will simply take what the candidate says at face value.

A candidate might claim they are passionate about working with bank accounting software in Assembly at your Assembly bank firm, but are they really?

Code reviewing

From my personal experience with AI review tools like CodeRabbit, Claude, and Gemini, when a tool flags 12 issues on a pull request, you get 12 comments, but only about 6 point to actual problems. The rest tend to be noise or go unaddressed.

This doesn't mean those tools are useless. Letting them do an initial pass is very helpful, and they find issues that some human reviewers would miss, especially deep logical problems.

The issue with automated review tools is that they are becoming the de facto gatekeepers for deploying code to production, leading to future outages and a low-quality codebase. The inmates have taken over the asylum, and we now have AI reviewing code generated by AI.

Review tools are very focused on checking whether your PR makes logical sense, such as whether you forgot to put a route behind auth, but they can't, for example, judge whether your code makes the codebase worse.

They can't raise the bar, which is the best part of human reviews. Every time we create or review a PR, it's a chance to learn how to become a better engineer and to leave the codebase in a better state than we found it.

Comments from peers like “you are duplicating logic, you should DRY these components” encourage us to review our own code and improve as engineers. Relying only on AI review takes away that chance.

Most incidents I observe happen because AI struggles to evaluate second-order effects; it overlooks Chesterton's fence. For example, an LLM deletes or changes a parameter that a downstream consumer still needs, and linting doesn't catch it. This reflects a limitation of current models: they can't review your code across repos.
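A toy illustration of that kind of cross-repo break, with two "repos" squeezed into one file. Every name here (`build_invoice_request`, `tenant_id`, `REQUIRED_FIELDS`) is made up for the example and not taken from any real incident.

```python
# "Repo A": a client library after an automated cleanup removed `tenant_id`.
# Inside this repo the parameter looked unused, so lint and unit tests still pass.
def build_invoice_request(amount_cents: int, currency: str) -> dict:
    return {"amount_cents": amount_cents, "currency": currency}


# "Repo B": a downstream service that still depends on the removed field.
# Hypothetical contract; a reviewer who only sees Repo A never reads this.
REQUIRED_FIELDS = {"amount_cents", "currency", "tenant_id"}


def missing_fields(payload: dict, required=REQUIRED_FIELDS) -> set:
    """Return the fields the downstream service expects but did not receive."""
    return set(required) - set(payload)


payload = build_invoice_request(1999, "USD")
print(missing_fields(payload))  # {'tenant_id'}
```

Nothing in Repo A's diff hints at the problem. The fence only makes sense once you look at who depends on it, and that context lives in another repository.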

Technical Writing

I'm tired of reading AI-generated writing: it just doesn't respect the reader's time. I see many AI-produced texts that could be shortened by a quarter without losing any important information.

Reading emails, meeting notes, or technical documents filled with emoji spam, strange analogies, and stock phrasing (“it's not X, it's Y”) is tiring. When I see the words “Executive Summary,” I often hesitate to read on.

I would have written a shorter letter, but I did not have the time.

— Blaise Pascal

There is power in simplicity and in respecting your reader's time. Most of my blog posts are cut by 50% just before I publish them.

Most people I know who use AI for communication do so because they believe their writing is not good.

But honestly, the goal of communication isn't to show off grammar skills; it's to get the point across.

Good grammar is often overrated anyway. One of my favorite documents is the leaked MrBeast memo PDF, which is full of grammatical and punctuation errors but clearly communicates its message through a “braindump”, much better than any LLM ever could.

Consumer research

When you ask an LLM about your roadmap, you're likely querying what countless other companies with very different issues have already tried. The AI relies on patterns from its training data, and in my experience, those patterns tend to be too generic compared to the insights of a seasoned domain expert.

If your software is meant for hospital accountants, do you think they take time to blog about the frustrations of their workflow? The knowledge is stored in their minds, and you need to extract it. This vital knowledge is never documented and thus never accessible to an LLM.

I spent three years researching and working on accessibility for nonverbal individuals. If I ask an AI what this industry lacks, it will start discussing the need for better UX (there are countless papers on this; I even naively wrote one). Still, I saw multiple companies enter the market with great UX products only to crash and burn.

After a while, I realized that apps with poor UX still dominate adoption because the companies behind them invest millions in lobbying, partnerships with insurance companies, and training, which is the part no one talks about.

Management tools

I get many messages from bots on Reddit and LinkedIn about AI management tools, but as I mentioned before, these tools lack context. The worst part is that they think they can make judgments with the limited context they have.

Here’s an example of a feedback tool output:

“This engineer sucks, they do 40% fewer PRs than the median, I marked him as an underperformer… I also told your boss, HR and CTO about it, better do something!”

- Some tool with a fancy name and a “.io” domain

Yet that engineer is one of the best I have worked with.

The issue is that these tools try to outsmart the manager, which gives lazy managers an excuse to pass along the AI's suggestions, resulting in poorly thought-out feedback because “The computer says no.”

So, what should you delegate to AI?

Think of the current LLMs as an “added value tool”, not a product, and definitely not an expert.

Most of the uses I described above are problematic because they overestimate what LLMs can do and let them operate unsupervised. You can't go back in time after the AI makes a mistake, and in these setups there are no guardrails to catch it.

I received a lot of criticism for my post about using AI to select E2E tests in a PR pipeline. Yes, it sounds crazy, but this is the “added value” part: if the AI fails to select the right tests, we will still catch the problem before deployment. The AI-selected run is an extra pre-check, and having it is better than having no pre-checks at all.
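A rough sketch of that “added value” shape, not the actual pipeline from that post. It assumes a hypothetical `ai_selector` callable, a made-up test list, and a full E2E run before deployment as the safety net.

```python
from typing import Callable, Iterable

# Hypothetical full suite; a real pipeline would get this from the test runner.
ALL_E2E_TESTS = ["checkout", "login", "search", "signup"]


def select_pre_merge_tests(
    changed_files: Iterable[str],
    ai_selector: Callable[[list], list],
    all_tests=ALL_E2E_TESTS,
) -> list:
    """Ask the AI for a subset; fall back to the full suite if the answer is unusable."""
    try:
        picked = list(ai_selector(list(changed_files)))
    except Exception:
        return list(all_tests)  # selector crashed or returned garbage: run everything
    known = [test for test in picked if test in all_tests]
    if not known or len(known) != len(picked):
        return list(all_tests)  # empty answer or unknown test names: run everything
    return known


def tests_before_deploy(all_tests=ALL_E2E_TESTS) -> list:
    """Assumed safety net: deployment runs the full suite, whatever the AI picked."""
    return list(all_tests)


print(select_pre_merge_tests(["auth/views.py"], lambda files: ["login"]))
# ['login']
print(select_pre_merge_tests(["auth/views.py"], lambda files: ["login", "payments_v9"]))
# ['checkout', 'login', 'search', 'signup']
```

The design choice is that the AI can only narrow the fast pre-merge check. It never decides what runs before deployment, so a bad selection costs a late failure and not an outage.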

Before giving AI control, ask how resilient your system is when (not if) the AI screws up, and make sure you have stronger safety nets in place before delegating completely.
